Diving into Dynamic Realms: My Journey from 2D to 3D Convolutional Neural Networks

If you would like to dive right into the code please see here

In the ever-evolving landscape of computer vision, the transition from static imagery to the dynamic world of videos marks a significant leap to understanding the dynamicity of our world. As someone who has spent years unravelling the mysteries hidden in static images using 2D Convolutional Neural Networks, I find myself at an exciting juncture in my PhD journey - diving into the spatio-temporal context. The shift from analyzing still frames to understanding the intricate sequences of video data is not just a step forward in complexity, but a step towards the realm brimming with untapped potential and unexplored challenges. My exploration into this domain is driven by a simple yet profound realization- our world is not static. It is a dynamic tapestry where each moment is a continuation of the last, and a stroy unfolding in time.

In my previous work, 2DCNNs served as a powerful tool, adept at capturing spatial hierarchies and patterns within images, exploring the intricate relationship between pixels, and encoding subtle patterns via edges and corners. However, as I delve into video data, I find myself in need of a more sophisticated ally - one capable of understanding not just the spatial but also the temporal nuances of visual data. This is exactly where 3D Convolutional Neural Networks (3D CNNs) enter the picture.

My shift to 3D CNNs is more than just an academic interest; it is a journey towards a deeper understanding of how we can enable machines to perceive and interpret the world in its full dynamism, our stochasticity, and uncertainties even in seconds of actions- much like we do. Every video clip is a symphony of motions, emotions, and interactions, with multilayers of subtle meanings- and of course 3D CNNs promise to be the key to deciphering these complex sequences. As I embark on this journey, I am not just to expand the boundaries of my knowledge, but also to contribute to the broader field of computer vision, pushing towards systems that can understand and interact with the world in richer, more meaningful ways.

In subsequent blog posts, I invite you with me through the explorations of 3DCNNs - from the core concepts that distinguish them from their 2D counterparts to the intricate challenges and learning curves I have encountered while applying them to video data. Whether you are a seasoned expert in the field, a beginner, a grad student, or a curious onlooker, I hope to offer insights and experiences that resonate with this domain.

Background and Core Concepts

The Evolution from 2D to 3D CNNs

Understanding CNNs: Convolutional Neural Networks (CNNs) have been the cornerstone of image analysis in computer vision for years. Traditional 2D CNNs are adept at processing static images—learning spatial hierarchies and patterns by applying filters that capture various aspects of the image, such as edges, textures, and shapes. If you would like to find out more about 2D CNN, please refer to my slides and labs here

Limitation in Capturing Temporal Information: While 2D CNNs excel in spatial understanding, they fall short in comprehending temporal dynamics, which is crucial when dealing with video data. Videos are essentially sequences of frames, where each frame is tied to its predecessor and successor, creating a temporal continuity that 2D CNNs cannot capture.

The Emergence of 3D CNNs

Introduction to 3D CNNs: This is where 3D Convolutional Neural Networks change the game. Unlike their 2D counterparts, 3D CNNs are designed to understand both spatial and temporal features. They achieve this by adding an additional dimension—time—to the convolutional process.

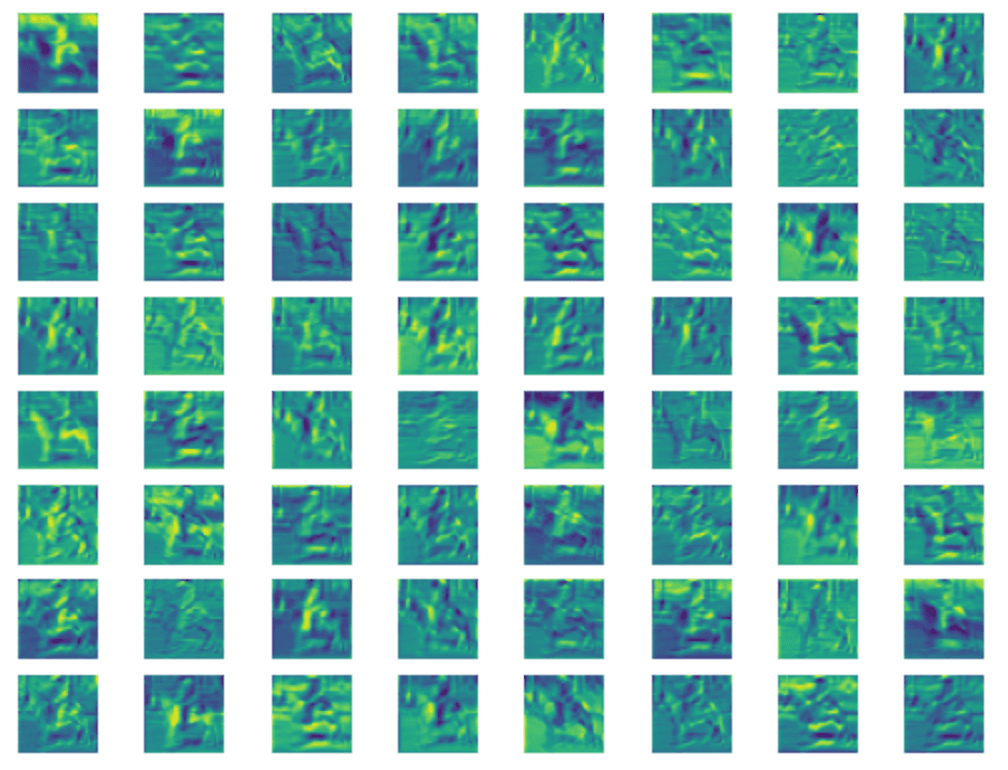

How 3D CNNs Work: In a 3D CNN, the convolutional filters extend along three dimensions—height, width, and depth (time). This allows the network to not only learn from the spatial content of each frame but also gain insights into the motion and changes occurring across frames. As a result, 3D CNNs can unravel the complex tapestry of actions and interactions in video sequences.

Applications of 3D CNNs

Beyond Static Frames: The ability of 3D CNNs to interpret time makes them incredibly powerful for a range of applications. This includes action recognition in videos, where understanding the sequence of movements is key, and medical imaging, where temporal changes in 3D scans can indicate crucial health information. In each of these areas, 3D CNNs offer a more comprehensive understanding by considering the evolution of visual data over time.

Challenges and Opportunities: The shift to 3D CNNs, however, is not without its challenges. The addition of the temporal dimension increases the computational complexity significantly. Additionally, training 3D CNNs requires not only larger datasets but also datasets that accurately represent temporal variations.

My Research Odyssey with 3D CNNs

Transitioning to Spatio-Temporal Analysis

Initial Exploration: My journey into 3D CNNs began as an extension of my work with 2D CNNs, where I had focused on spatial feature extraction from static images. The transition to 3D CNNs marked a significant shift towards integrating the temporal dimension. My initial challenge lay in comprehending the intricacies of 3D convolutional layers – understanding how they extend the spatial interpretation of 2D CNNs to include temporal relationships.

The architectural nuances of 3D CNNs, such as the incorporation of time as a third dimension in convolutional operations, presented both a conceptual and practical learning curve. This was not merely about adapting to a new technique but rethinking the approach to data representation and processing.

Navigating Data Complexity

Data Preprocessing and Management: One of the most formidable challenges I faced was the preprocessing of video data. Unlike static images, video data comes with additional complexities like variable frame rates, diverse resolutions, and most crucially, a substantial increase in data volume. Developing an efficient preprocessing pipeline that could handle such diversity and volume was paramount. This involved not only frame extraction and resizing but also temporal sampling strategies to capture relevant motion information without overburdening the computational process.

Architectural Design and Computational Considerations: Designing the architecture of a 3D CNN requires a delicate balance. The model had to be sophisticated enough to capture intricate temporal patterns without becoming computationally infeasible. This entailed an iterative process of model design, where each layer's parameters were carefully calibrated to maximize learning while minimizing computational costs. The extended training durations and heightened resource demands of 3D CNNs necessitated a more strategic approach, leveraging distributed computing and optimizing algorithms for efficiency.

Gleaning Insights and Developing Solutions

Performance Optimization: In pursuit of optimal performance, I explored a variety of architectural tweaks and parameter adjustments. Strategies such as modifying stride and kernel size in convolutional layers, and incorporating advanced techniques like transfer learning, played a crucial role in surmounting the limitations imposed by the sheer scale of video data.

Combating Overfitting: The increased parameter count in 3D CNNs heightened the risk of overfitting. To mitigate this, I implemented a combination of regularization strategies, data augmentation techniques, and dropout layers. These measures were critical in ensuring that the model generalized well, rather than merely memorizing the training data.

Reflecting on the Journey

Working with 3D CNNs reinforced the virtue of patience. The field of 3D convolutional analysis is still burgeoning, with much left to explore and understand. Navigating this terrain often required an iterative, trial-and-error approach, underscoring the importance of resilience in research.

Charting Future Pathways

Advancing 3D CNN Research

Harnessing Technological Growth: As computational capabilities continue to advance and datasets grow both in size and complexity, the potential applications of 3D CNNs are set to broaden significantly. I am particularly intrigued by the prospects in domains like augmented reality, where interpreting both spatial and temporal information is key to creating immersive experiences.

Ongoing Exploration: My foray into 3D CNNs is an ongoing chapter in my academic journey. I'm keen to delve deeper into novel architectures and apply these models across a wider spectrum of applications. The ultimate goal is to push the frontiers of computer vision and contribute to the development of systems that can interact with our dynamic world more intelligently and intuitively.

Sneak Peek into the Next Blog Post

Intuition, Mathematics, Code: A Technical Deep Dive into 3D CNNs

In my upcoming blog post, we'll take a technical deep dive into the world of 3D Convolutional Neural Networks. I'll unravel the intuition behind these sophisticated models, illuminating how they interpret not just the visual cues in static images but also the temporal dynamics in videos. We'll delve into the mathematics that underpins these networks, demystifying how they learn and process information across both space and time. Expect to see detailed discussions on model architecture, accompanied by snippets of code that bring these concepts to life. Whether you're keen on understanding the nuts and bolts of 3D convolution operations or interested in the practical aspects of implementing these models in PyTorch, the next post promises to be a treasure trove of insights.

From discussing the nuances of kernel size and stride in 3D convolutions to exploring strategies for optimizing network performance, we will cover a spectrum of topics that will cater to both beginners and seasoned practitioners in the field. The goal is to provide you with a comprehensive understanding of 3D CNNs that balance theoretical depth with practical applicability. So, stay tuned for an enriching journey into the technical heart of 3D CNNs!

To take a sneek peak into an experimental 3D CNN Architecture please check here